Apologies to all who came here earlier. I mistakenly published this yesterday before it was finished.

I don’t read denialist blogs very often. Life is too short. And there are more interesting scientific and policy problems to grapple with than trying to figure out where some guy on Watts Up With That, with no background in climate science, gets his facts and reasoning all wrong.

Luckily, dipping into Sou’s excellent blog, Hot Whopper, saves me a lot of time and keeps me up to date on all the craziness. A recent article of Sou’s: Desperate Deniers Part 2: David Middleton fakes satellite data “Just for grins” does an excellent job of debunking a WUWT post by David Middleton (archived here). Sou mainly focuses on a graph that Middleton drew comparing contiguous US land surface temperatures with global lower troposphere temperatures. Fish in a barrel.

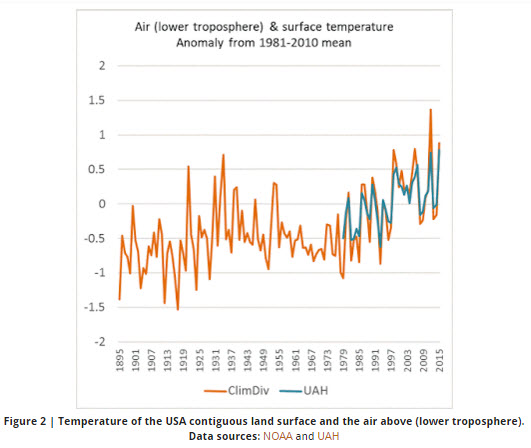

Sou does show that NOAA’s corrected annual land surface temperature anomalies for the CONUS actually compare quite nicely with the lower troposphere data above the same region as prepared by the University of Alabama Huntsville. Thus:

What caught my eye about David Middleton’s post was this:

I’m not saying that I know the adjustments [to the NOAA data] are wrong; however anytime that an anomaly is entirely due to data adjustments, it raises a red flag with me. In my line of work, oil & gas exploration, we often have to homogenize seismic surveys which were shot and processed with different parameters. This was particularly true in the “good old days” before 3d became the norm. The mistie corrections could often be substantial. However, if someone came to me with a prospect and the height of the structural closure wasn’t substantially larger than the mistie corrections used to “close the loop,” I would pass on that prospect.

I have the same professional background as Middleton and have encountered similar problems throughout my career. Indeed if you have to fudge-correct the ties of seismic data to an extent greater than the magnitude of the anomalies, then don’t drill them. Reprocess the data or persuade some other company to drill them for you.

But the analogy is flawed, you may be surprised to hear. Misties between seismic lines can arise from any number of sources related to processing differences, or even acquisition errors, like getting the surveying wrong. Most commonly, though, the misties arise from statics corrections. These are the kinds of misties that you most commonly try to correct by “closing the loop”, simply by shifting one profile up or down against another until they match.
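For readers who have never tied seismic lines, here is a minimal sketch of the simplest kind of "closing the loop": a single bulk time shift applied to one line so that it matches another at their intersection. The function name `bulk_shift_ms` and the horizon times are hypothetical, made up for illustration; real mistie analysis also deals with phase and amplitude differences and with many intersections at once.

```python
from statistics import mean


def bulk_shift_ms(times_a_ms, times_b_ms):
    """Constant shift (ms) to add to line B so it best ties line A.

    For a single constant shift, the least-squares answer is just the
    mean of the differences between the paired horizon picks.
    """
    if not times_a_ms or len(times_a_ms) != len(times_b_ms):
        raise ValueError("need the same non-zero number of picks on both lines")
    return mean(a - b for a, b in zip(times_a_ms, times_b_ms))


# Hypothetical two-way times (ms) for three horizons picked on both
# lines at one intersection. These are illustrative numbers, not real data.
line_a = [1200.0, 1650.0, 2100.0]
line_b = [1188.0, 1640.0, 2086.0]

shift = bulk_shift_ms(line_a, line_b)

# What is left after the shift is the part of the mistie that a simple
# up-or-down shift cannot remove.
residuals = [a - (b + shift) for a, b in zip(line_a, line_b)]

print(f"bulk shift applied to line B: {shift:.1f} ms")
print("residual misties (ms):", residuals)
```

In this toy case the shift is comparable to the relief of many subtle structures, which is exactly the situation in which Middleton says he would pass on a prospect.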

Datum and statics corrections are done to correct for variations in topography and in the near-surface low-velocity layers. If you didn’t do them, you might well end up with a fake syncline under every hill and an anticline under every valley. There’s a good primer on them here. Like correcting the land temperature record for things like time of observation, instrument changes and weather station moves, statics corrections are basic and necessary for mitigating fake anomalies, but it is not always possible to do them perfectly consistently.

Mistie corrections of the kind Middleton is talking about are mostly (but not exclusively) due to differences in statics corrections applied at the same point. In other words, if you want to make an analogy between temperature and seismic data, the corrections themselves (Tobs and homogenization for temperature; datum and statics for seismic) are a reasonable comparison, whereas the seismic “misties” observed between intersecting 2d lines are more like the differences observed between differently processed temperature datasets. For example, let’s compare the NCDC processing with the Berkeley Earth processing, which used very different methodologies to correct the temperature record:

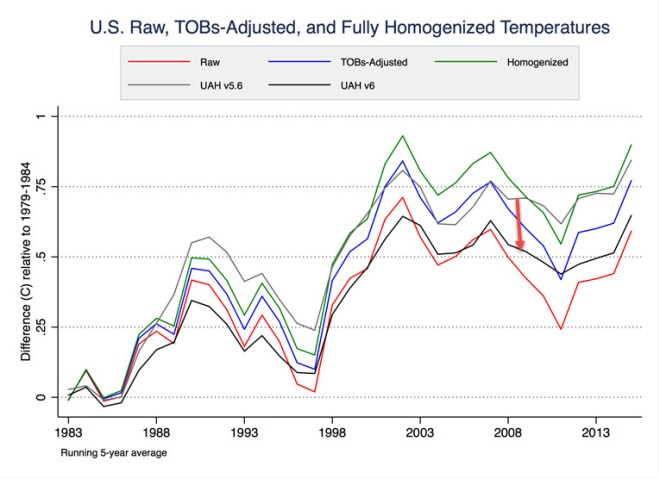

Here, the “mistie” is the gap between the red and blue lines. Indeed, it would be unwise to question the reality of any anomaly smaller than the “misties” between different versions. But to reject all anomaly variations that are smaller than the corrections (dashed line to the solid lines) is an error.
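The distinction can be sketched numerically. The values below are synthetic, in hundredths of a degree C, and are not taken from NCDC or Berkeley Earth; the names `processing_a`, `processing_b` and `resolvable` are hypothetical. The point is only that the size of the correction and the size of the "mistie" are two different numbers, and it is the second one that sets the floor for which anomalies to trust.

```python
def max_abs_diff(xs, ys):
    """Largest absolute difference between two equally sampled series."""
    return max(abs(x - y) for x, y in zip(xs, ys))


# Synthetic anomalies in hundredths of a degree C (NOT real data).
raw = [30, 10, 0, 20, 50]            # uncorrected record
processing_a = [0, -10, 0, 30, 70]   # one group's corrected version
processing_b = [5, -5, 0, 25, 75]    # an independent corrected version

# Size of the corrections: raw versus each corrected series.
correction = max(max_abs_diff(raw, processing_a),
                 max_abs_diff(raw, processing_b))

# Size of the "mistie": disagreement between the two corrected series.
mistie = max_abs_diff(processing_a, processing_b)


def resolvable(anomaly, floor):
    """True if an anomaly is larger than the chosen uncertainty floor."""
    return anomaly > floor


anomaly = 20  # a hypothetical feature of interest, same units

print("correction size:", correction)
print("mistie size:", mistie)
print("passes if the floor is the correction:", resolvable(anomaly, correction))
print("passes if the floor is the mistie:", resolvable(anomaly, mistie))
```

With these made-up numbers, an anomaly of 20 would be thrown out if the corrections were used as the floor, yet it is four times larger than the disagreement between the two independently processed versions.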

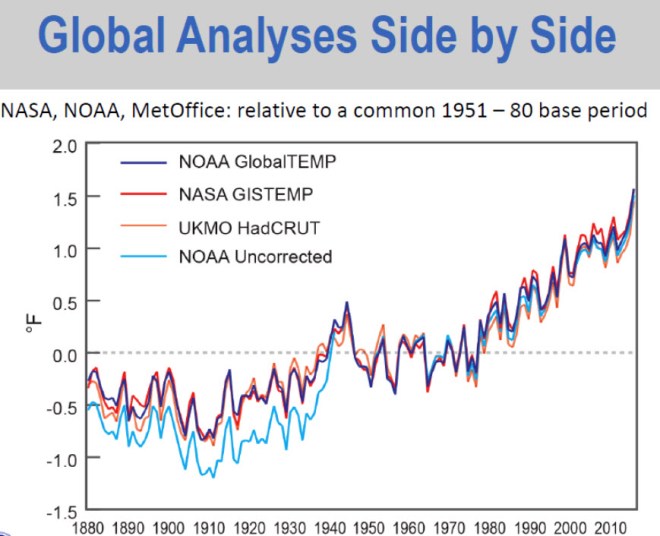

The plot for global temperature values, comparing NOAA, NASA and UK Met Office data, shows even smaller “misties” and very different corrections.

Note that the pre-1940 correction is the opposite sign to the CONUS (land-only) data, because the dominant correction here is in measuring sea-surface temperatures. There was a mid-century switch from using buckets (which measured cool) to engine-intake water (which measured warm).

Satellite measurements

Zeke Hausfather provided a graph on WUWT comparing the NOAA CONUS data with two versions of the UAH TLT satellite temperatures.

Courtesy Zeke Hausfather. I added the red arrow to show the UAH5.6 to UAH6.0 adjustment.

Note that the recent (and so far, not peer-reviewed) adjustment to the satellite data is as large as the Tobs or homogenization corrections to the thermometer data.

Middleton wrote:

I think I can see why the so-called consensus has become so obsessed recently with destroying the credibility of the satellite data.

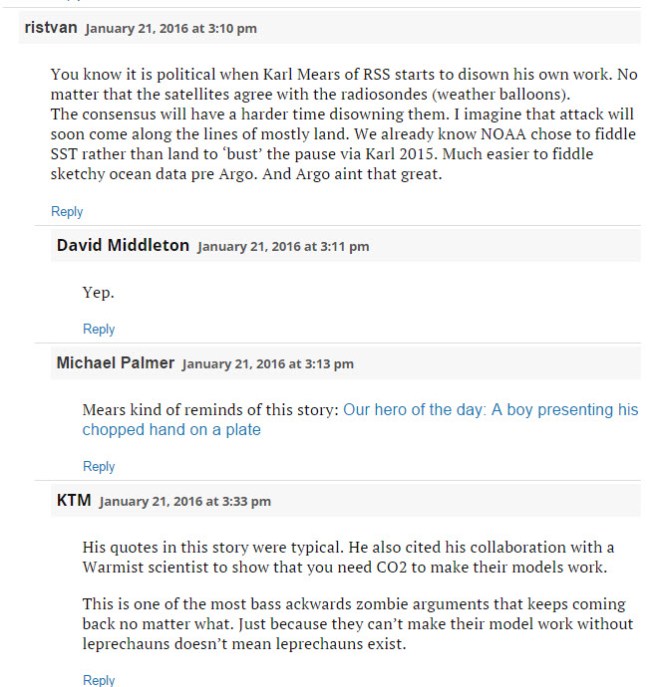

and commenters on the WUWT post added:

Note the conspiracy ideation.

The recent flurry of articles on the accuracy of satellite data (e.g., Tamino, Eli Rabett, Kevin Cowtan, Peter Sinclair) has, in part, been a response to the recent Senate hearings instigated by Senator Ted Cruz, in which satellite data were touted as “the best we’ve got”.

But, as Tamino points out, the various measurements of temperatures in the lower troposphere have been lately drifting apart.

The “UAH” shown is version 5.6. Version 6.0 is reportedly closer to RSS.

Here is a graph (hat-tip Tom Curtis) that shows an intermediate stage in processing satellite data (from Po-Chedley et al. 2013):

The top panel shows not quite raw data, but it does illustrate the challenge faced by processors of satellite data. It’s not surprising that the methodologies and models used have to be frequently updated.

If the satellite measurements are to be touted as a gold standard, which one do we pick: RSS, UAH5.6 or UAH6.0?

The difference is that Spencer and Christy have presented UAH as more reliable than ground stations for 25 years, whereas the RSS folks like Carl Mears are more realistic … and better at finding bugs at UAH.

Middleton certainly isn’t alone in getting his climate science wrong:

Thanks, Ken. I couldn’t watch it all. It was the usual litany of bogus arguments from what I did see.

I did manage to get through it and I agree, it is really bad.

Sadly, he is one of the luncheon speakers at the GeoConvention in March.