Originally published at Corporate Knights on March 17, 2016.

Sorry Ted Cruz. There’s no conspiracy among scientists to exaggerate global warming by fudging the numbers.

Last year was the warmest year recorded since the measurement of global surface temperatures began in the nineteenth century. The second-warmest year ever was 2014. Moreover, because of the persisting effects of the equatorial Pacific Ocean phenomenon known as El Niño, many experts are predicting that 2016 could set a new annual record. January and February have already set new monthly records, with February half a degree Celsius warmer than any February in history.

This news is deeply unsettling for those who care about the future of the planet. But it is even more upsetting for people opposed to climate mitigation, since it refutes their favourite talking point – that global warming has stalled in recent years.

U.S. Congressman Lamar Smith claims there has been a conspiracy among scientists to fudge the surface temperature records upwards and has demanded, by subpoena, to have scientists’ emails released.

Senator and presidential candidate Ted Cruz recently organized a Senate hearing on the temperature record in which he called upon carefully selected witnesses to testify that calculations of temperature made by satellite observations of the upper atmosphere are superior to measurements made by thermometers at the Earth’s surface.

It’s easy to cherry-pick data in order to bamboozle people. The process of making consistent temperature records from surface measurements and satellite observations is complicated and is easy to misrepresent.

But the fact remains that there are no conspiracies afoot. Here’s why.

On solid ground

Thermometers that measure daily maximum and minimum temperatures are usually housed in Stevenson screens – the familiar white-painted, louvered boxes placed a few feet above the ground. The boxes are ideally positioned away from trees and buildings. Over the years, urbanization may encroach on some weather stations, forcing them to be relocated.

Other times, observation protocols need to be tweaked. For example, afternoon temperature readings in the U.S. have been replaced by morning readings. This change can produce small variations in the average daily temperatures measured. For the sake of consistency, corrections need to be made for these and other effects.

Different approaches can be taken to correcting the temperature records. Some methods look for discontinuities in a station’s temperature record that do not show up at nearby stations. Other methods resample the dataset and consider only the best-sited and most consistent readings. Diverse statistical techniques are applied to estimate temperature trends in areas where data are sparse, such as the Arctic.
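To make the first of these approaches concrete, here is a minimal sketch of a neighbour comparison. It is not the method used by any particular research group. The numbers are synthetic and invented for illustration, the function names `find_breakpoint` and `adjust` are hypothetical, and shifting the earlier segment to match the later one is simply an assumption of this sketch.

```python
from statistics import mean

def find_breakpoint(station, neighbour_mean, min_segment=3):
    """Return (index, step) for the largest mean shift in station minus neighbours."""
    diff = [s - n for s, n in zip(station, neighbour_mean)]
    best_index, best_step = None, 0.0
    # Try every split point that leaves at least min_segment values on each side.
    for i in range(min_segment, len(diff) - min_segment + 1):
        step = mean(diff[i:]) - mean(diff[:i])
        if abs(step) > abs(best_step):
            best_index, best_step = i, step
    return best_index, best_step

def adjust(station, index, step):
    """Shift the segment before the break so it lines up with the later segment."""
    return [t + step if i < index else t for i, t in enumerate(station)]

# Synthetic annual anomalies in degrees Celsius (invented, not real measurements).
neighbour_mean = [0.00, 0.05, 0.02, 0.08, 0.10, 0.07, 0.12, 0.15, 0.13, 0.18]
# Same regional signal, but a hypothetical station move makes readings 0.4 C cooler from year 5.
station = [n if i < 5 else n - 0.4 for i, n in enumerate(neighbour_mean)]

index, step = find_breakpoint(station, neighbour_mean)
homogenized = adjust(station, index, step)
print("break at index", index, "with step", round(step, 2))
```

Because the jump appears at the station but not in the neighbour average, it is treated as an artifact of the move rather than a change in the climate. Real procedures have to cope with noise, multiple breaks and imperfect neighbours, which this sketch ignores.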

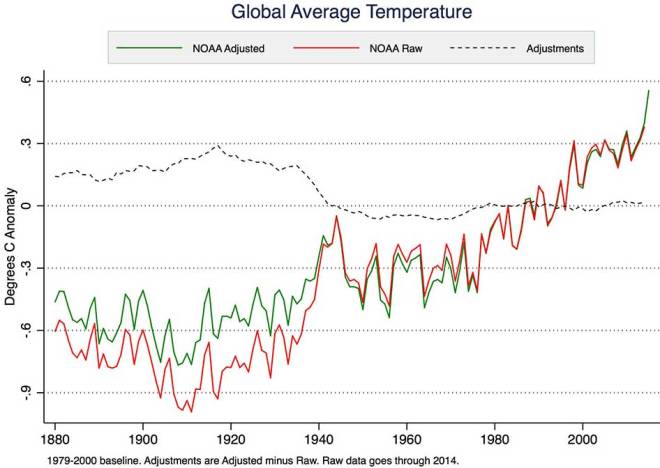

These adjustments, however they are made, tend to slightly increase the warming trends of global land temperatures compared to the raw data. For certain small regions, like the contiguous United States, the corrections can be quite large. Nevertheless, once the corrections are made, the global (land and sea) average temperature records prepared by independent research groups, using different methods, produce remarkably similar results overall.

Sea change

Over the twentieth century, the methods used to measure the temperature at the surface of the ocean have changed. At first, seawater was sampled by hauling a bucket onto the ship’s deck and measuring the water temperature with a thermometer. Because water cools by evaporation during the process, measurements made this way tend to be low. Later on, starting in the 1940s, temperatures were measured at engine-room cooling-water intakes. More recently, a worldwide system of buoys has been deployed. These tend to measure temperatures a little lower than the engine-room intakes do.

The change from bucket to engine-room intake sampling means that the temperatures measured before 1940 need to be adjusted upwards by about half a degree Celsius. This is the largest correction made to any temperature record and, because the oceans cover two-thirds of the Earth’s surface, it decreases the global warming trend observed over the post-industrial period. It’s worth noting here that congressional hearings on climate science have been confined to examining cases where adjustments to the surface temperature measurements increase global warming trends.
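The arithmetic behind that claim can be sketched with a toy series. Everything here is an assumption made for illustration: the underlying warming rate of 0.7 degrees per century is invented, the series is synthetic, and only the half-degree pre-1940 offset comes from the paragraph above.

```python
def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    den = sum((x - mean_x) ** 2 for x in xs)
    return num / den

years = list(range(1900, 2001, 10))  # one value per decade, 1900 to 2000

# Invented "true" ocean anomalies: a steady 0.007 C per year.
true_series = [0.007 * (y - 1900) for y in years]

# Raw record: bucket-era readings (before 1940) come out 0.5 C too cold.
raw = [t - 0.5 if y < 1940 else t for y, t in zip(years, true_series)]

# Adjusted record: raise the pre-1940 readings by 0.5 C.
adjusted = [t + 0.5 if y < 1940 else t for y, t in zip(years, raw)]

raw_trend = ols_slope(years, raw) * 100        # degrees C per century
adjusted_trend = ols_slope(years, adjusted) * 100
print("raw trend:", round(raw_trend, 2), "adjusted trend:", round(adjusted_trend, 2))
```

Warming the early part of the record flattens the line, so the adjusted trend comes out smaller than the raw one. That is the opposite of what a scheme to exaggerate warming would do.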

The more recent changeover from engine-room intake to buoy measurements requires ocean temperatures recorded over the past 20 years or so to be adjusted slightly to match the earlier measurements. This adjustment was outlined in a 2015 peer-reviewed publication by National Oceanic and Atmospheric Administration (NOAA) scientists led by Thomas Karl.

Although much smaller than the bucket corrections, these adjustments have led Congressman Lamar Smith to subpoena NOAA scientists demanding access to their emails. Needless to say, all methodologies and measurements supporting the study are already in the public domain, consistent with normal scientific disclosure and transparency practices.

The adjustments made to surface temperature measurements are necessary and well documented. The combined effect of all these changes is actually to reduce the rate of surface warming over the past 100 years compared to what we see in the raw temperature data.

Up in the air

Since 1979, it has been possible to calculate a global temperature record of the atmosphere from satellite observations. The satellites do not measure temperature directly, but instead observe the intensity of microwave radiation from gas molecules in the atmosphere. It is possible to tune the observations to focus on a certain depth range below the satellite and then to process the data to improve the resolution. The calculated temperatures that are most commonly cited – the lower troposphere or TLT data – sample a broad band from about 10 km altitude to just above the surface.

Not only is the vertical resolution of satellite measurements not very good, but the process of converting observations of microwave glow into temperatures is complicated, dependent on modelling assumptions and prone to error. The orbits of the satellites decay, resulting in changes to the altitude from which the measurements are taken, as well as changes to the time of day that they are above a particular spot on the Earth.

Significant adjustments have had to be made to the satellite temperature records. Several years ago, the satellite-derived temperatures showed a global cooling trend. However, this was later shown to be an artifact due to changes in satellite orbits that had not been properly corrected. Once the error was corrected, the satellite temperature trend showed global warming consistent with the surface temperature observations.

Using satellites to measure temperatures is a little like using an infrared camera to measure a clothed person’s body temperature at a distance. Although a technique like that might be useful for screening, no doctor would rely on it for diagnostic purposes. Instead, they would stick to tried-and-true measurements using well-calibrated thermometers in direct contact with the patient’s body.

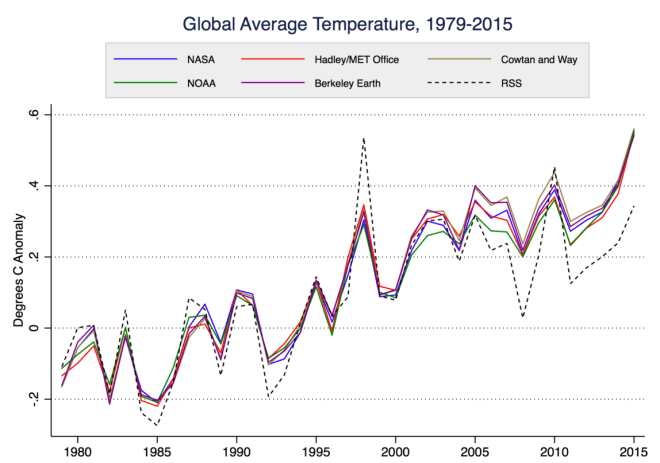

Satellites do, however, provide valuable estimates of the temperature in the upper atmosphere and they deliver global coverage, with only small gaps at the poles. They track the surface measurements quite well on the short-term ups and downs and they reveal a clear upward trend since observations began in 1979. However, starting around 2005, the trends of the satellites and surface measurements have drifted apart, with the satellites (RSS in the graph below) calculating anomalies that are a little cooler. Whether this is a real effect of the upper atmosphere warming more slowly than the surface over the past 10 years or a problem with the satellite methodology remains to be seen.

The uncertainty of temperature trends calculated from satellites is about five times as large as that of trends calculated from surface temperature measurements. Carl Mears, the lead scientist for Remote Sensing Systems, one of the main groups that process the satellite data, has written:

“A similar, but stronger case can be made using surface temperature datasets, which I consider to be more reliable than satellite datasets (they certainly agree with each other better than the various satellite datasets do!).”

Nevertheless, global warming deniers like to refer to the satellite data as the gold standard. The frequent refrain “no warming since 1998” relies on a double cherry-pick: first, it takes 1998, the hottest year ever in the satellite record, as its starting point; second, it disregards the more reliable measurements of the temperature at the Earth’s surface where we actually live.
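The first half of that cherry-pick is easy to demonstrate with made-up numbers. The sketch below assumes a synthetic record with a steady warming of 0.015 degrees per year and a single 0.4-degree spike in 1998. Both figures are invented for illustration and are not taken from any satellite dataset.

```python
def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    den = sum((x - mean_x) ** 2 for x in xs)
    return num / den

years = list(range(1979, 2016))

# Synthetic anomalies: steady warming plus one unusually hot year in 1998.
anomalies = [0.015 * (y - 1979) + (0.4 if y == 1998 else 0.0) for y in years]

full_trend = ols_slope(years, anomalies)

# Cherry-picked window: start the trend line at the spike year.
start = years.index(1998)
picked_trend = ols_slope(years[start:], anomalies[start:])

print("full record (C per decade):", round(full_trend * 10, 3))
print("starting in 1998 (C per decade):", round(picked_trend * 10, 3))
```

Starting the line on an exceptionally hot year pulls the left end of the fit upwards, so the computed trend shrinks even though the underlying warming rate in this toy series never changes.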

In February 2016, Carl Mears and colleague Frank Wentz published a peer-reviewed paper that adjusted upwards the satellite-derived temperatures for the upper atmosphere since 1998 by as much as 0.2 of a degree Celsius. This will remove much of the discrepancy between satellite and surface temperature trends.

Senator Ted Cruz’s recent Senate hearing on the temperature record called carefully selected witnesses who extolled satellite data as the most reliable. Carl Mears was not invited.

Natural thermometers

Canadians may not be inclined to listen to American politicians when it comes to the climate record. But neither do we have to rely exclusively on what the mainstream scientists are telling us: there are natural thermometers that show us what is going on.

Gardeners will be aware that plant hardiness zones have been shifting northwards. Over a 50-year period, there has been a change in three plant-hardiness zones in western Canada and a change of one zone or more in central and eastern Canada.

Amateur hockey players may have noticed that the season for outdoor rinks is shrinking. Fifty years ago, the outdoor hockey season was, on average, 15 days longer than it is today.

Hikers and tourists in western Canada’s mountains can see that the glaciers are in retreat. A 2015 study led by scientists from B.C. universities, published in Nature Geoscience, predicts that by 2100, 70 per cent of the mass of the glaciers in B.C. and Alberta will be gone.

Mythbusting

There’s no doubt that surface temperatures are rising in Canada and almost everywhere else on the globe. The evidence of the instruments and our own observations is clear and consistent. Even as measurements fluctuate a little from year to year because of natural cycles like El Niño, the rising trend of temperatures is unmistakeable.

Denying the existence of a warming planet may be an effective short-term strategy for some politicians, but their interference is hurting the planet in the long term.

Thanks to Zeke Hausfather for providing the graphs and to Peter Sinclair for the video.